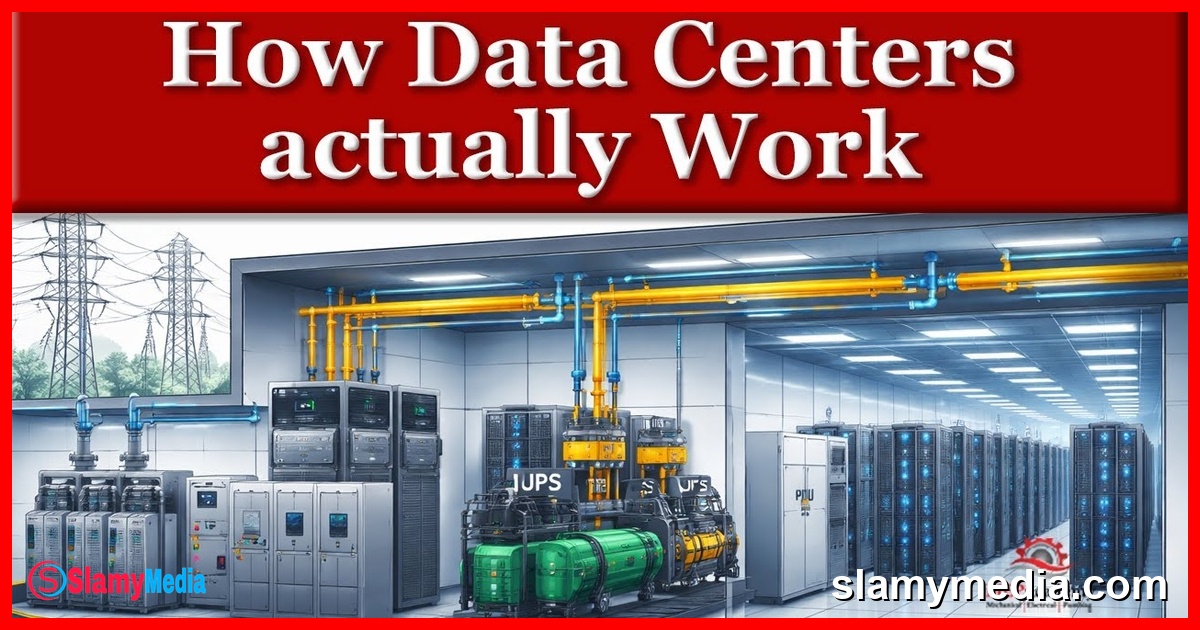

Every email you send, every video you stream, and every file stored in the cloud exists somewhere physically. Behind the internet is a network of buildings filled with servers, electrical infrastructure, and cooling systems working continuously to keep data moving. These buildings are called data centers. In this article series, we will explain how data centers actually work from a mechanical and electrical perspective, focusing on the systems that keep servers powered, cooled, and operating without interruption. This first article provides a big-picture overview. We will explain what a data center does, why these facilities consume so much energy, and why mechanical and electrical systems are the most critical part of the building. In the following articles, we will break down each system in greater detail.

Key Takeaways

- Data centers are critical infrastructure that manage the storage, processing, and distribution of digital information.

- The energy consumption of data centers is a major concern due to the continuous operation of servers and the heat they generate.

- Redundancy and reliability are paramount in data center design to ensure uninterrupted service and equipment longevity.

The Core Function of Data Centers

At its simplest level, a data center is a facility designed to store, process, and distribute digital information. Inside the building are rows of servers, which are specialized computers responsible for storage, computing, and network traffic. Unlike office computers that operate intermittently, servers operate continuously. They run 24 hours a day, every day of the year. This creates an important reality: servers consume large amounts of electricity, and nearly all of that electrical energy eventually becomes heat.
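To put "continuously" into numbers, the short Python sketch below compares the yearly energy of one device running around the clock with the same device on an office-style schedule. The 0.5 kW draw and the 8-hour, 250-day schedule are hypothetical figures chosen only for illustration; the function name `annual_energy_kwh` is likewise our own.

```python
def annual_energy_kwh(power_kw: float, hours_per_day: float = 24, days_per_year: int = 365) -> float:
    """Energy (kWh) drawn in one year by a device pulling power_kw on the given schedule."""
    return power_kw * hours_per_day * days_per_year

# Hypothetical 0.5 kW server: always on vs. an office-style schedule
always_on = annual_energy_kwh(0.5)            # 24 h x 365 days
office_hours = annual_energy_kwh(0.5, 8, 250) # 8 h x 250 workdays

print(f"Always on:    {always_on:,.0f} kWh/yr")
print(f"Office hours: {office_hours:,.0f} kWh/yr")
print(f"Ratio:        {always_on / office_hours:.2f}x")
```

Under these assumptions the always-on server uses more than four times as much energy per year as the same machine used only during working hours, before multiplying by the thousands of servers in a facility.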

While most people think of data centers as IT facilities, from an engineering perspective, they are really energy conversion buildings. Electricity goes in, computing work is performed, and heat comes out. The entire facility exists to manage that process safely and reliably. Every data center, regardless of size, must solve three fundamental problems: continuous power, continuous cooling, and continuous operation.
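The "electricity in, heat out" view can be turned into a quick conversion. The sketch below assumes, as the paragraph does, that essentially all IT power becomes heat, and expresses that heat in units cooling engineers commonly use: BTU per hour (1 W ≈ 3.412 BTU/h) and tons of refrigeration (1 ton = 12,000 BTU/h). The 1 MW load is a hypothetical example and `heat_load` is an illustrative helper, not a standard tool.

```python
W_TO_BTU_PER_HR = 3.412142   # 1 W ≈ 3.412142 BTU/h
BTU_PER_HR_PER_TON = 12_000  # 1 ton of refrigeration = 12,000 BTU/h

def heat_load(it_power_kw: float) -> dict:
    """Treat all IT electrical power as heat and express it in common cooling units."""
    btu_per_hr = it_power_kw * 1000 * W_TO_BTU_PER_HR
    return {
        "heat_kw": it_power_kw,  # electrical kW in ≈ thermal kW out
        "btu_per_hr": btu_per_hr,
        "cooling_tons": btu_per_hr / BTU_PER_HR_PER_TON,
    }

load = heat_load(1000)  # hypothetical 1 MW of IT equipment
print(f"{load['btu_per_hr']:,.0f} BTU/h ≈ {load['cooling_tons']:.0f} tons of cooling")
```

In other words, every megawatt of servers needs on the order of 280 tons of cooling capacity just to hold temperatures steady.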

Continuous Power: The Lifeline of Data Centers

The first and most critical challenge is ensuring continuous power. Servers cannot be allowed to go dark when utility power is lost. Even brief interruptions can cause data loss or service outages affecting thousands or millions of users. As a result, power systems must remain available even when equipment fails or utility power is interrupted.

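Power reliability in data centers is achieved through multiple layers of backup. Uninterruptible power supplies (UPS), usually backed by batteries, carry the load instantly when utility power fails, and on-site diesel generators start and take over, typically within seconds, supplying power for as long as the outage lasts and fuel is available. Together, these systems keep power flowing to the servers through grid outages and equipment failures. The goal is not just to maintain power but to do so with no interruption seen at the server and as efficiently as possible.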
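Because the generators need a short time to start and accept load, the UPS only has to bridge that gap. A rough first estimate of the battery energy involved is load × time. The sketch below uses a hypothetical 1 MW load, a hypothetical 30-second bridging time, and a hypothetical design margin of 2; it also ignores inverter losses and battery aging, which a real sizing calculation would include.

```python
def ups_energy_kwh(load_kw: float, bridge_seconds: float, design_margin: float = 1.0) -> float:
    """Battery energy (kWh) needed to carry load_kw until the generators take over.

    design_margin is a hypothetical multiplier (2.0 = size for twice the expected gap).
    Inverter losses and battery aging are ignored in this simplified estimate.
    """
    return load_kw * (bridge_seconds / 3600) * design_margin

needed = ups_energy_kwh(1000, 30, design_margin=2.0)
print(f"Minimum battery energy: {needed:.1f} kWh")
```

The small result is the point: the UPS is a bridge, not a long-term power source, while the generators handle extended outages.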

Continuous Cooling: The Lifesaving System

Because servers generate heat constantly, cooling systems must also operate continuously. If cooling stops, temperatures can rise quickly, forcing equipment to reduce performance or shut down to protect itself. Cooling in a data center is not about comfort; it is about equipment survival.

Modern data centers use a variety of cooling technologies, including air cooling (often supplied by chilled water systems) and, in some high-density facilities, liquid cooling delivered directly to the servers. The choice of cooling method depends on factors such as climate, energy costs, and the scale of the data center. These systems are designed to keep the air entering the servers within a target range, commonly the 18°C to 27°C recommended by ASHRAE, to protect the longevity and performance of server hardware.
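As a simple illustration of how that temperature range is used in practice, the sketch below classifies server inlet temperature readings against the 18°C to 27°C band mentioned above. The rack names and readings are hypothetical, and real monitoring systems typically add warning bands and time delays on top of a check like this.

```python
RECOMMENDED_MIN_C = 18.0
RECOMMENDED_MAX_C = 27.0

def classify_inlet(temp_c: float) -> str:
    """Compare a server inlet air temperature with the recommended band."""
    if temp_c < RECOMMENDED_MIN_C:
        return "below range"
    if temp_c > RECOMMENDED_MAX_C:
        return "above range"
    return "ok"

# Hypothetical inlet readings in °C
readings = {"rack-A01": 22.5, "rack-A02": 28.1, "rack-B07": 17.2}
for rack, temp in readings.items():
    print(f"{rack}: {temp:.1f} °C -> {classify_inlet(temp)}")
```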

Continuous Operation: The Foundation of Reliability

Data centers are designed for uptime. Maintenance, equipment failures, and repairs must occur without interrupting operation. This requirement is what drives the heavy use of redundancy throughout both mechanical and electrical systems.
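One common way to make maintenance possible without downtime is to install one more unit than the load requires, often called "N+1". The sketch below checks whether a group of units (chillers, UPS modules, and so on) can still cover the load with any single unit out of service; removing the largest unit is the worst case. The capacities and loads are hypothetical, and the function name is our own.

```python
def survives_single_outage(unit_capacities_kw: list[float], load_kw: float) -> bool:
    """True if load_kw is still covered with any one unit out for maintenance or failure."""
    if not unit_capacities_kw:
        return False
    # Worst case: the largest unit is the one that is offline.
    return sum(unit_capacities_kw) - max(unit_capacities_kw) >= load_kw

# Hypothetical 1,500 kW cooling load
print(survives_single_outage([500, 500, 500, 500], 1500))  # four 500 kW units (N+1)
print(survives_single_outage([500, 500, 500], 1500))       # three units, no spare
```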

Redundancy in data centers is not just a design choice; it is a necessity. Critical components, from power distribution units to cooling equipment, are provided with redundant capacity or redundant paths so that there is no single point of failure. This level of redundancy is what allows facilities to target availability as high as 99.999% ("five nines"), or roughly five minutes of downtime per year, a standard that matters for businesses relying on cloud services and real-time data processing.
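The arithmetic behind availability targets is straightforward, and the sketch below shows it. The first function converts an availability figure into minutes of downtime per year. The second estimates the availability of redundant paths, where any one path can carry the load, under the simplifying assumption that failures are independent; real systems only approximate this, since shared causes such as a fire or a maintenance error can take out several paths at once.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 (leap years ignored)

def downtime_minutes_per_year(availability: float) -> float:
    """Expected minutes of downtime per year for a given availability (0..1)."""
    return (1 - availability) * MINUTES_PER_YEAR

def parallel_availability(unit_availability: float, units: int = 2) -> float:
    """Availability of `units` redundant paths, any one of which can carry the load.
    Assumes independent failures (a simplification)."""
    return 1 - (1 - unit_availability) ** units

for a in (0.999, 0.9999, 0.99999):
    print(f"{a:.3%} -> {downtime_minutes_per_year(a):.1f} min/yr")

# Two hypothetical 99.9% paths in parallel
print(f"{parallel_availability(0.999):.6f}")
```

Under the independence assumption, pairing two 99.9% paths yields about 99.9999%, which is why duplicated paths are such a powerful tool.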

The Three Pillars of Data Center Infrastructure

Although data centers appear complex, most of the infrastructure falls into three major system groups. The first group is electrical systems. Electrical infrastructure brings power into the building and distributes it safely to server equipment. This includes utility connections, switchgear, backup power systems, and power distribution equipment. The goal is simple: power must always be available.
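The electrical chain from utility to server can be modeled as a graph, which makes the "no single point of failure" goal checkable: remove each component in turn and see whether the rack can still be reached from any power source. The sketch below does exactly that with a breadth-first search. Both topologies are hypothetical and heavily simplified; the second one assumes a dual-corded rack fed by independent A and B paths.

```python
from collections import deque

def reachable(graph: dict, sources: list, target: str, removed: str) -> bool:
    """Breadth-first search from all sources, skipping the removed node."""
    queue = deque(s for s in sources if s != removed)
    seen = set(queue)
    while queue:
        node = queue.popleft()
        if node == target:
            return True
        for nxt in graph.get(node, ()):
            if nxt != removed and nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False

def single_points_of_failure(graph: dict, sources: list, target: str) -> list:
    """Components whose loss alone cuts the target off from every source."""
    nodes = set(graph) | {n for outs in graph.values() for n in outs}
    return sorted(n for n in nodes - {target}
                  if not reachable(graph, sources, target, removed=n))

sources = ["utility", "generator"]
single_path = {  # hypothetical: one switchgear, one UPS, one PDU
    "utility": ["switchgear"], "generator": ["switchgear"],
    "switchgear": ["ups"], "ups": ["pdu"], "pdu": ["rack"],
}
dual_path = {  # hypothetical: independent A and B paths to a dual-corded rack
    "utility": ["switchgear_a", "switchgear_b"],
    "generator": ["switchgear_a", "switchgear_b"],
    "switchgear_a": ["ups_a"], "ups_a": ["pdu_a"], "pdu_a": ["rack"],
    "switchgear_b": ["ups_b"], "ups_b": ["pdu_b"], "pdu_b": ["rack"],
}
print(single_points_of_failure(single_path, sources, "rack"))
print(single_points_of_failure(dual_path, sources, "rack"))
```

The single-path design exposes its switchgear, UPS, and PDU as single points of failure, while the A/B design has none at this level of detail.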

The second group is mechanical cooling systems. Mechanical systems remove the heat generated by servers. Depending on the facility, this may include chillers, cooling towers, pumps, air handling units, or specialized cooling equipment located directly at the server racks. The objective is to keep equipment operating within safe temperature ranges at all times.
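To see what "removing heat" means for an air-cooled rack, the sketch below applies the standard sensible-heat relation, heat = density × airflow × specific heat × temperature rise, solved for airflow. It assumes approximate values for air near sea level and room temperature (1.2 kg/m³ and 1005 J/(kg·K)); the 10 kW rack and 10 K inlet-to-exhaust rise are hypothetical.

```python
AIR_DENSITY = 1.2      # kg/m^3, approx. at sea level and ~20 °C
AIR_CP = 1005.0        # J/(kg*K), specific heat of air
M3S_TO_CFM = 2118.88   # 1 m^3/s ≈ 2,118.88 cubic feet per minute

def required_airflow_m3s(heat_w: float, delta_t_k: float) -> float:
    """Airflow (m^3/s) needed to carry heat_w away with a delta_t_k temperature rise."""
    return heat_w / (AIR_DENSITY * AIR_CP * delta_t_k)

flow = required_airflow_m3s(10_000, 10)  # hypothetical 10 kW rack, 10 K rise
print(f"{flow:.2f} m^3/s ≈ {flow * M3S_TO_CFM:,.0f} CFM")
```

Halving the allowed temperature rise doubles the airflow required, which is one reason high-density racks push facilities toward liquid cooling.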

The third group is controls and monitoring systems. Controls tie electrical and mechanical systems together into one operating environment. These systems monitor temperatures, power usage, equipment status, and alarms, allowing the facility to respond automatically to changing conditions or equipment failures. In some modern data centers, these systems are also paired with machine learning models that help optimize performance and predict potential failures before they occur.
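A greatly simplified version of one automatic response is shown below: when a running cooling unit reports a fault, the control logic stops it, starts a standby unit, and raises alarms. The unit names, the `CoolingUnit` class, and the one-for-one replacement rule are all hypothetical; real building management and monitoring platforms use vendor-specific logic, sequencing delays, and many more inputs.

```python
from dataclasses import dataclass

@dataclass
class CoolingUnit:
    name: str
    running: bool
    faulted: bool = False

def respond_to_faults(units: list[CoolingUnit]) -> list[str]:
    """Stop faulted running units, start one standby unit per fault, return alarm messages."""
    alarms = []
    standby = [u for u in units if not u.running and not u.faulted]
    for unit in units:
        if unit.running and unit.faulted:
            unit.running = False
            alarms.append(f"ALARM: {unit.name} faulted")
            if standby:
                replacement = standby.pop(0)
                replacement.running = True
                alarms.append(f"Started standby {replacement.name}")
            else:
                alarms.append("ALARM: no standby capacity left")
    return alarms

# Hypothetical air handling units: AHU-2 has just reported a fault
units = [
    CoolingUnit("AHU-1", running=True),
    CoolingUnit("AHU-2", running=True, faulted=True),
    CoolingUnit("AHU-3", running=False),
]
for message in respond_to_faults(units):
    print(message)
```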
