Elon Musk's latest announcement has set off a wave of speculation and analysis in the tech and AI communities, as the entrepreneur unveils a radical new initiative that could redefine the boundaries of artificial intelligence and automation. The project, dubbed Macrohard, is a joint venture between Tesla and xAI, Musk's AI research company, and it promises a system that can emulate the operations of entire enterprises in real time.

What is Macrohard?

Macrohard represents a bold leap forward in AI capabilities, combining the computational power of Tesla's AI4 chip with the neural networks running on xAI's infrastructure. The system is built around Grok and is designed to function as a sophisticated digital assistant that carries out complex tasks by analyzing real-time data from computer screens and user interactions. Musk describes this as a "Digital Optimus": a software counterpart to Tesla's humanoid robot that works with the adaptability of a human operator, but with far greater speed and precision.

Key Takeaways

- Macrohard is a joint Tesla-xAI project that aims to create a real-time AI system capable of emulating entire companies.

- Grok functions as a digital assistant, processing real-time data and executing tasks with the efficiency of a human mind.

- The system will leverage Tesla's AI4 chip and xAI's advanced hardware to deliver unprecedented performance in AI operations.

Elon Musk's vision for Macrohard is rooted in the idea of a system that can perform tasks traditionally reserved for human employees, such as managing workflows, analyzing data, and making decisions in real time. This is a significant departure from current AI applications, which are often limited to specific, narrow tasks. The potential impact on industries ranging from finance to logistics is profound, as such a system could drastically reduce operational costs and increase productivity.

Technical Breakdown and Implications

The Macrohard project is built on the foundation of Grok, which operates on a dual-processing model. This model divides the AI's cognitive functions into two distinct modes: System One, which handles instinctive, rapid decision-making, and System Two, which handles deliberate, analytical thinking. The architecture is inspired by the dual-process theory of human cognition, in which quick, intuitive responses are balanced by slower, more methodical reasoning.
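To make the dual-processing idea concrete, here is a minimal sketch of how a fast path and a slow path might be combined. It is purely illustrative and does not reflect the actual design of the system: the names `system_one`, `system_two`, and `decide`, the lookup-table fast path, and the string-based "plan" are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    action: str
    mode: str  # "system_one" (fast) or "system_two" (slow)


def system_one(task: str, cache: dict[str, str]) -> Optional[str]:
    """Fast path (hypothetical): return a remembered action, or None if unfamiliar."""
    return cache.get(task)


def system_two(task: str) -> str:
    """Slow path (hypothetical): stand-in for deliberate, step-by-step reasoning."""
    steps = task.split()
    return "plan:" + ">".join(steps)


def decide(task: str, cache: dict[str, str]) -> Decision:
    """Try the fast path first and fall back to the slow path when it has no answer."""
    fast = system_one(task, cache)
    if fast is not None:
        return Decision(fast, "system_one")
    slow = system_two(task)
    # Remember the result so the same task takes the fast path next time.
    cache[task] = slow
    return Decision(slow, "system_two")
```

In this sketch, a task seen before is answered immediately from the cache, while an unfamiliar task triggers the slower planner once and is then remembered. That mirrors the intuition behind dual-process theory rather than any confirmed implementation detail.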

Grok is designed to run efficiently on Tesla's AI4 chip, which is significantly more cost-effective than the high-end hardware typically used in AI systems. This cost efficiency, combined with the capabilities of xAI's neural networks, positions Macrohard as a unique solution in the AI landscape. The system's ability to process and act on real-time data from computer screens and user inputs marks a significant advancement in the field of AI-driven automation.
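The idea of acting on what appears on a screen can be pictured as an observe-decide-act loop. The sketch below is a toy illustration under stated assumptions, not a description of how Macrohard works: `Screen`, `Action`, `choose_action`, and `run_agent` are hypothetical names, and the rule-based policy stands in for whatever model would actually interpret the screen.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Screen:
    text: str  # hypothetical: text recognized in one captured frame


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "click", "type", "wait"
    target: str  # UI element the action applies to, empty for "wait"


def choose_action(screen: Screen) -> Action:
    """Toy policy: map what is visible on screen to the next UI action."""
    if "Save changes?" in screen.text:
        return Action("click", "Save")
    if "Login" in screen.text:
        return Action("type", "username")
    return Action("wait", "")


def run_agent(
    frames: Iterable[Screen],
    policy: Callable[[Screen], Action] = choose_action,
) -> list[Action]:
    """Observe each frame, decide on an action, and record it in order."""
    log: list[Action] = []
    for frame in frames:
        log.append(policy(frame))
    return log
```

A real system would replace the frame list with live screen capture and the recorded actions with actual mouse and keyboard events; the loop structure is the only part this example is meant to convey.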

One of the most intriguing aspects of Macrohard is its potential to change the way businesses operate. By enabling real-time decision-making and task execution, the system could streamline processes that are currently labor-intensive and time-consuming. This could lead to a paradigm shift in industries that rely heavily on human oversight, as Macrohard could replace or augment many of these roles.

Deployment and Future Prospects

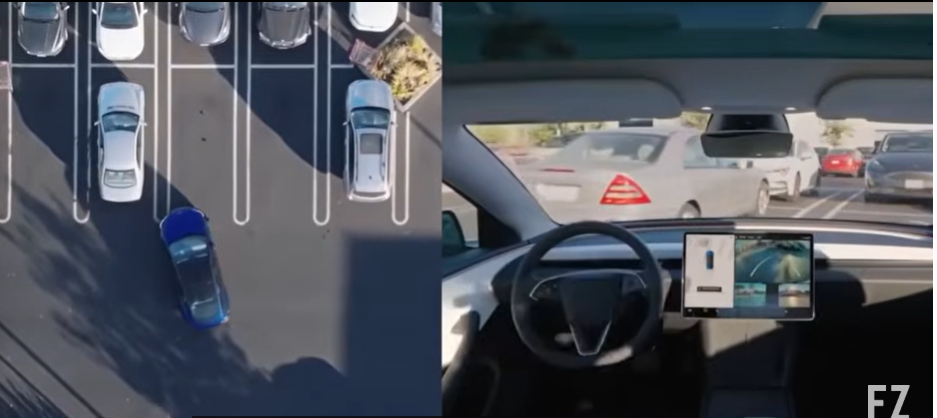

Elon Musk has outlined an ambitious deployment plan for Macrohard, including the integration of Digital Optimus units into Tesla vehicles and the strategic placement of these units at Supercharger stations equipped with 7 GW of power. This infrastructure will support the system's operations, ensuring that it can function at peak efficiency across a wide range of applications.

Additionally, the system's ability to operate within Tesla vehicles means that users could have a personal AI assistant that can perform office tasks while the vehicle is in motion or parked. This integration highlights the potential for Macrohard to seamlessly blend into everyday life, offering productivity enhancements that were previously unimaginable.

As the Macrohard project progresses, it is expected to have a ripple effect across the AI and tech industries. The successful implementation of this system could set new standards for AI capabilities, influencing the development of future technologies and redefining the role of AI in both corporate and personal environments.

While the full extent of Macrohard's capabilities remains to be seen, the project's potential to transform industries and redefine the landscape of AI is undeniable. As the world continues to embrace automation and intelligent systems, the implications of Macrohard could shape the future of technology and business in ways that are yet to be fully realized.

Elon Musk's Macrohard project is not just a technological advancement; it is a potential paradigm shift in the way businesses and individuals interact with AI. The integration of such a powerful system into everyday life could lead to a new era of productivity and efficiency, where AI-driven assistants manage complex tasks with remarkable precision. As the project moves forward, the tech community will be watching closely to see how this vision translates into real-world applications and what impact it will have on the global economy and society at large.

